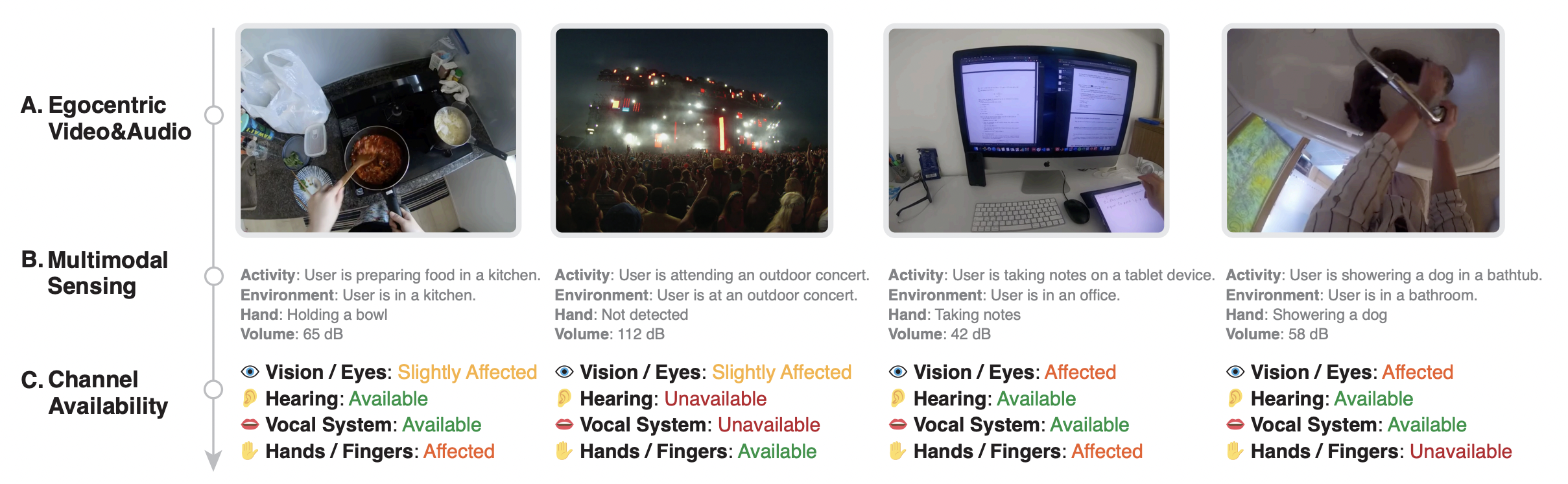

Situationally Induced Impairments and Disabilities (SIIDs) can significantly hinder user experience in contexts such as poor lighting, noise, and multi-tasking. While prior research has introduced algorithms and systems to address these impairments, they predominantly cater to specific tasks or environments and fail to accommodate the diverse and dynamic nature of SIIDs. We introduce Human I/O, a unified approach to detecting a wide range of SIIDs by gauging the availability of human input/output channels. Leveraging egocentric vision, multimodal sensing, and reasoning with large language models, Human I/O achieves a mean absolute error of 0.22 and 82% accuracy in availability prediction across 60 in-the-wild egocentric video recordings in 32 different scenarios. Furthermore, while the core focus of our work is on the detection of SIIDs rather than the creation of adaptive user interfaces, we showcase the efficacy of our prototype via a user study with 10 participants. Findings suggest that Human I/O significantly reduces effort and improves user experience in the presence of SIIDs, paving the way for more adaptive and accessible interactive systems in the future.
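As a rough illustration of how the two reported metrics relate, the sketch below computes mean absolute error and accuracy over availability predictions. It assumes availability is encoded as integer levels (for example, 0 for fully available up to 3 for unavailable); that encoding, the function names, and the toy values are assumptions made for illustration and are not results or code from the paper.

```python
from typing import Sequence


def mean_absolute_error(predicted: Sequence[int], actual: Sequence[int]) -> float:
    """Average absolute distance between predicted and labeled levels."""
    if len(predicted) != len(actual) or not predicted:
        raise ValueError("inputs must be non-empty and of equal length")
    return sum(abs(p - a) for p, a in zip(predicted, actual)) / len(predicted)


def accuracy(predicted: Sequence[int], actual: Sequence[int]) -> float:
    """Fraction of predictions that match the label exactly."""
    if len(predicted) != len(actual) or not predicted:
        raise ValueError("inputs must be non-empty and of equal length")
    return sum(p == a for p, a in zip(predicted, actual)) / len(predicted)


# Toy values only (hypothetical, not taken from the paper's evaluation).
# Assumed encoding: 0 = available ... 3 = unavailable.
toy_predicted = [0, 1, 2, 3, 1]
toy_actual = [0, 1, 3, 3, 0]

toy_mae = mean_absolute_error(toy_predicted, toy_actual)
toy_acc = accuracy(toy_predicted, toy_actual)
```

Under this encoding, a prediction that is off by one level still lowers accuracy but contributes only 1 to the absolute error, which is why the two metrics are typically reported together.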

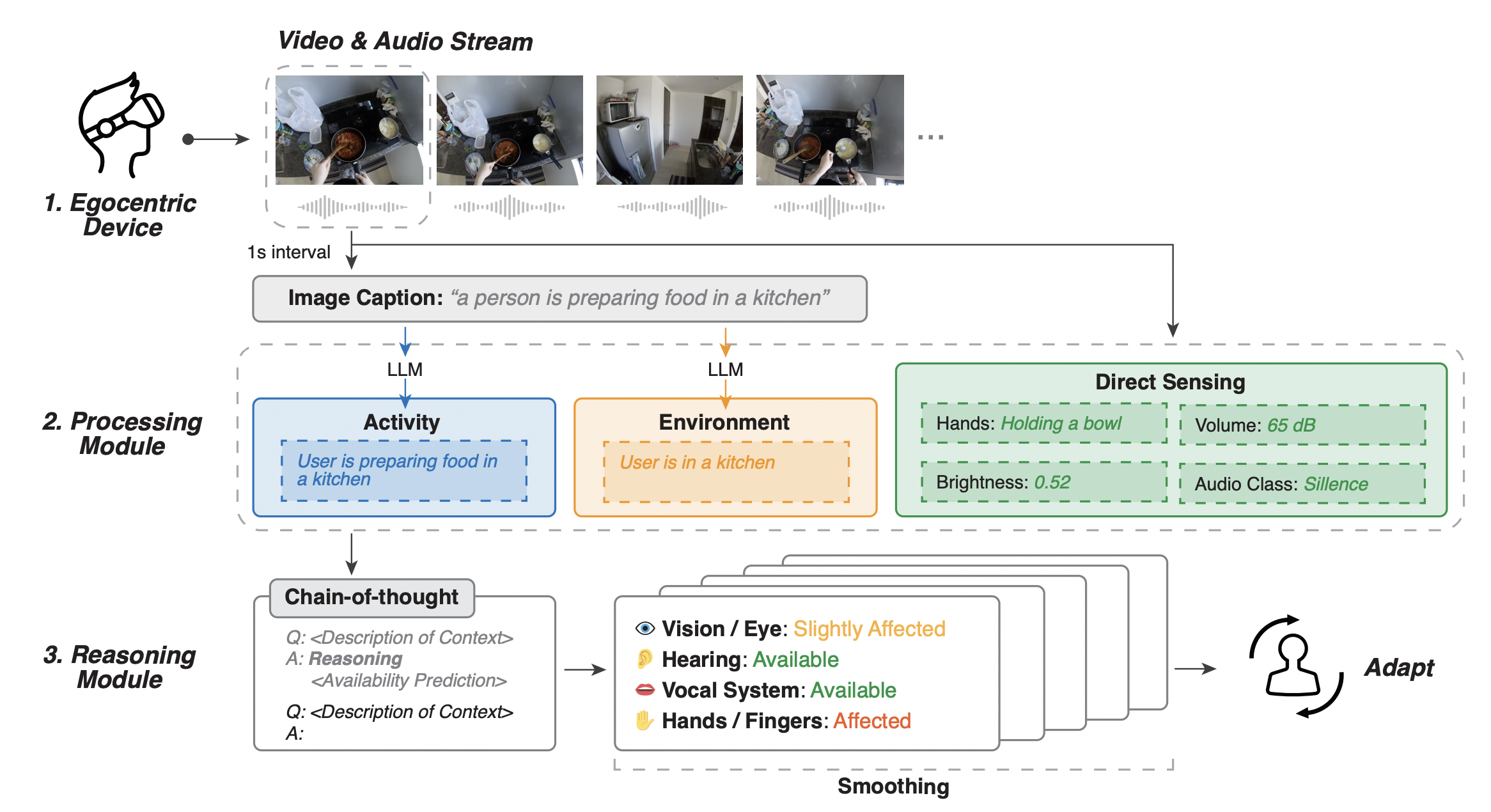

The Human I/O pipeline comprises three components: (1) a camera and microphone capturing the user’s egocentric video and audio stream; (2) video and audio data processing using computer vision, NLP, and audio analysis to obtain contextual information, including the user’s activity, environment, and direct sensing; and (3) reasoning over this contextual information with a large language model using chain-of-thought prompting to predict channel availability, followed by a smoothing algorithm for enhanced system stability.
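The smoothing step in component (3) can be pictured with a small sketch. The caption does not say which smoothing algorithm is used, so the version below swaps in a sliding-window majority vote as a stand-in; the class name `MajoritySmoother`, the window size, and the availability labels are all hypothetical.

```python
from collections import Counter, deque


class MajoritySmoother:
    """Sliding-window majority vote over per-frame predictions.

    This is an assumed stand-in for the pipeline's smoothing step,
    not the algorithm described in the paper.
    """

    def __init__(self, window: int = 5) -> None:
        if window < 1:
            raise ValueError("window must be at least 1")
        # Keeps only the most recent `window` predictions.
        self.history: deque = deque(maxlen=window)

    def update(self, prediction: str) -> str:
        """Record a raw prediction and return the smoothed one."""
        self.history.append(prediction)
        counts = Counter(self.history)
        top = max(counts.values())
        # On a tie, prefer the most recent of the tied labels.
        for label in reversed(self.history):
            if counts[label] == top:
                return label
        return prediction  # unreachable; history is never empty here


# Hypothetical raw predictions for one channel, one per time step.
raw = ["available", "available", "unavailable", "available",
       "unavailable", "unavailable"]
smoother = MajoritySmoother(window=3)
smoothed = [smoother.update(label) for label in raw]
```

In this sketch, the single "unavailable" at the third step is suppressed, and the output only switches once that label holds the majority of the window. One smoother instance per channel would keep the channels independent.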

@inproceedings{10.1145/3613904.3642065,
author = {Liu, Xingyu Bruce and Li, Jiahao Nick and Kim, David and Chen, Xiang 'Anthony' and Du, Ruofei},
title = {Human I/O: Towards a Unified Approach to Detecting Situational Impairments},
year = {2024},
isbn = {9798400703300},
publisher = {Association for Computing Machinery},
address = {New York, NY, USA},
url = {https://doi.org/10.1145/3613904.3642065},
doi = {10.1145/3613904.3642065},
booktitle = {Proceedings of the CHI Conference on Human Factors in Computing Systems},
articleno = {965},
numpages = {18},
keywords = {augmented reality, context awareness, large language models, multimodal sensing, situational impairments},
location = {Honolulu, HI, USA},
series = {CHI '24}
}